Numerically approximating Exp-Minus-Log is surprisingly easy.

Before we start.⌗

Last week has been quite eventful to me. I taught my first university lecture on information theory. I had to fight my E16 a bit to let me actually finish my slide deck and wrote a short blog post at 5:00 a.m. out of frustration. Then I went to sleep, and by the time I woke up I found out that I am in the news…

If you’re here looking for my work, keep searching. The E16 bug is definitely not as serious as the news outlets like to claim. But actual ground-breaking scientific work rarely breaks into mainstream, I’m genuinely as surprised as you are. I have done nothing to end up being famous for a window manager. It would be great if news sites reported as vividly on, for example, that I work on genomics and produced the best (Pareto-optimal) available digital genome storage solution or that I did some cool stuff when i was 16 or wrote a SoTA data compressor that is seemingly used by CERN and FitGirl Repacks (oh irony!) during my Abitur exams. But none of these stories sell.

I am also eternally grateful to many men who have been kind enough to spend their time bitching about me online for something I had no control over, to the weirdos who complain that I don’t spend my time getting pregnant or that I look ugly. Doing God’s job, really. I don’t need to be told that “one day I will get it” because the media reported on my 20 page bug fix. I probably deserve some punishment for the sins that I have committed in my life, but having to read the opinions of Polish people online is a disproportionate one. If you’re so smart, look at my things that actually can’t reasonably break into the mainstream. And if you’re also (regrettably) living in Poland and wondering why Poles abroad want - on average - nothing to do with you, imagine how my e-mail inbox looks like.

Anyway…

Introduction⌗

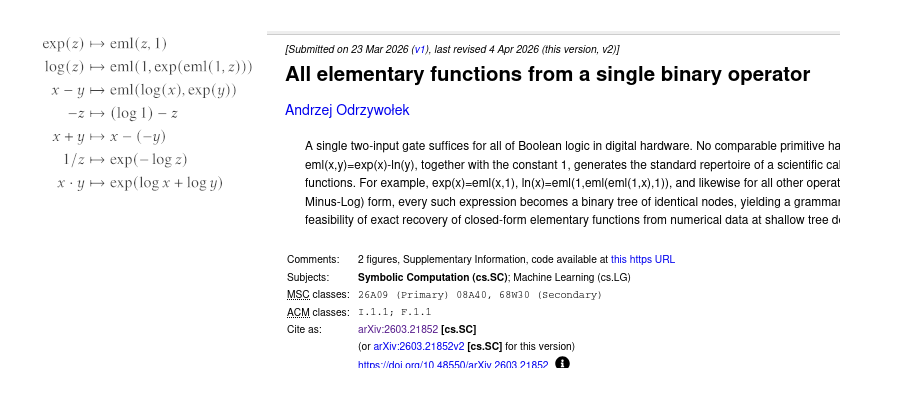

Andrzej proposes a curious operator, Exp-Minus-Log, defined as

Much ink has been spilled about it. You can probably read about this sensation online. But the core claim is that you can use EML to compute most elementary functions, so it could be a two-variable basis function for some type of a calculator.

This looks deceptively simple to compute and I can’t wait for people to tell me to pull libm and try subtracting the exps and logs. Not so simple. The C standard library typically gives you both to 1 ULP each, but the trouble is the word difference. When , the subtraction cancels most of the significant bits, and the relative error in the result can explode to thousands of ULPs. This is catastrophic cancellation, and it is the central enemy of this post.

I’ll walk you through some progressively cleverer implementations that I’ve looked into, benchmark them against __float128 reference values over 20mln random inputs, and explain the IEEE 754 and algebraic tricks that make the final version work.

All code in this article requires a C compiler with hardware FMA support (-march=native or -mfma), IEEE 754 binary64 arithmetic (essentially any modern CPU), and linking against -lm.

The Problem⌗

exp and log are each faithfully rounded by glibc/musl on x86-64: the returned double differs from the mathematical value by at most 1 ULP. So each operand carries at most relative error (mantissa bits).

Subtraction typically does not introduce new rounding error (Sterbenz’s lemma). However, it amplifies existing error. If and agree in their top bits, then , and the 1 ULP error in each operand becomes ULP in the result.

For , that is half a million ULP we get a result with only about 33 correct bits out of 53. Not very useful. Some would anyway write:

This is the baseline. It works perfectly when there is little cancellation, and catastrophically when there is. Over random pairs with , , measured against __float128:

| Metric | Value |

|---|---|

| ns/call | 12.4 |

| worst ULP | 851,150 |

| 0 ULP | 69.913% |

| below 1 ULP | 97.659% |

| below 2 ULP | 98.638% |

| above 1024 ULP | 673 (0.0034%) |

The vast majority of inputs are fine. But 1.4% of cases exceed 2 ULP, and the worst case has fewer than 33 correct bits.

We can start tackling the problem by reusing the fairly popular trick of double-double arithmetic. Such a number is a pair of IEEE 754 doubles satisfying , representing the unevaluated sum . This gives roughly 106 bits of significand, so enough headroom to survive 20+ bits of cancellation with precision to spare.

We have some key building blocks, all of which compile to a handful of instructions with FMA: Knuth’s 2Sum and Dekker’s 2Prod. Given , we produce such that exactly, or such that exactly.

So now the basic approach would be to compute and each as a double-double, subtract in extended precision and round once.

exp/log in double-double domain⌗

For , the arguments are reduced via , where . The reduction is carried out in double-double using FMA product residuals:

Then is approximated by an 8-term Estrin polynomial (serial depth 3 FMAs instead of 8 for Horner), with the tail kept as a double-double via two_prod:

The result is scaled by a 64-entry precomputed table (stored as double-double, bits each) and then by via IEEE exponent injection:

For , the input is decomposed as by extracting IEEE exponent bits, and is reduced via a 64-entry table . The kernel is an atanh series on where .

One critical subtlety is that the denominator must be kept as a double-double. Without this, its rounding error propagates through and dominates the result:

The final subtraction is a double-double subtract followed by a single rounding:

| Metric | Value |

|---|---|

| ns/call | 27.1 |

| worst ULP | 7 |

| 0 ULP | 99.999% |

| below 1 ULP | 100.000% |

| above 1024 ULP | 0 |

This is accurate but expensive: on my machine (Ryzen 7 PRO 7840U), about 2.2 times the naive (glibc) cost. It also costs us two 64-entry tables (2 KB total). The 7 ULP worst case comes from inputs with 19 bits of cancellation, right at the limit of our 74-bit intermediates.

The elegant solution⌗

The unspoken key insight to evaluating eml, from my experiments, seems to be the following. When , the identity

follows directly from , and therefore .

This is actually a really good way to tackle the problem, because expm1 by design handles the cancellation that exp(x) - 1 would otherwise suffer near zero. The problematic subtraction is absorbed into a dedicated function that already knows how to deal with it.

The identity reduces the problem to computing the argument accurately. When cancellation is severe, then of course , and both and are , so even a small error in gets amplified by .

This is why the slow path still needs double-double; we call log_dd(y) to get , then log_dd(t_h) to get , and reduce:

Then a Taylor-corrected expm1 on the double-double :

The remainder is , negligible. The final assembly uses FMA for an exact product residual:

Contrariwise, for of inputs, there is no significant cancellation. In this regime, the naive exp(x) - log(y) is already ULP. The guard condition is thus a single comparison after the subtract:

Hence, the full code is as follows:

Finally, the results are again very good:

| Metric | Value |

|---|---|

| ns/call | 21.4 |

| worst ULP | 2 |

| 0 ULP | 73.016% |

| below 1 ULP | 99.958% |

| below 2 ULP | 100.000% |

| above 1024 ULP | 0 |

A comparison of the methods tested:

| Naive | DD brute-force | new identity | |

|---|---|---|---|

| ns/call | 12.4 | 27.1 | 21.4 |

| worst ULP | 851,150 | 7 | 2 |

| tables | 0 | 2 KB (exp/log) | 1 KB (log only) |

| code | 1 line | ~200 lines | ~130 lines |

All benchmarks ran on x86-64 with glibc, GCC -O2 -march=native, measured against libquadmath’s 128-bit reference (authoritative source) over uniformly random pairs with and .